Monitoring GPU Jobs on the ORC Cluster

Need For GPU Tracking

Once your jobs are running on the GPU nodes, you can monitor their GPU utilization. Monitoring shows how much GPU compute and memory a job actually uses, so you can request an appropriately sized allocation. Instead of allocating an entire A100 GPU and using only 10% of its capacity, it is more efficient to allocate 1/7th of the GPU and use it fully. Right-sized requests leave more GPU capacity for other users and reduce wait times for job execution.

Retrieving the GPU Node

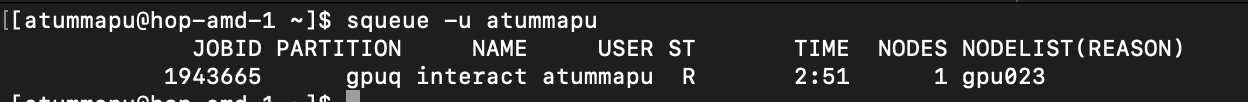

After submitting your job to Slurm, use the command below to find the GPU node on which your job is running:

squeue -u <NET_ID>

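Replace <NET_ID> with your NetID. For a running job, the NODELIST(REASON) column of the output shows the name of the node.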
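For scripting, the node lookup can be narrowed to running jobs. The following is a minimal sketch, assuming squeue is called with its -h (no header) and -o (output format) options so that each line holds the job ID, the job state, and the node list. The helper name running_job_nodes is hypothetical and not part of Slurm.

```shell
# Print "<job id> <node list>" for running jobs only.
# Reads lines of the form "<job id> <state> <node list>" on stdin,
# as produced by: squeue -u <NET_ID> -h -o "%i %T %N"
# (running_job_nodes is a hypothetical helper name.)
running_job_nodes() {
    awk '$2 == "RUNNING" { print $1, $3 }'
}

# Usage on the cluster:
#   squeue -u <NET_ID> -h -o "%i %T %N" | running_job_nodes
```

Pending jobs have no node assigned yet, so they are filtered out by the state check.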

Logging onto the GPU Node

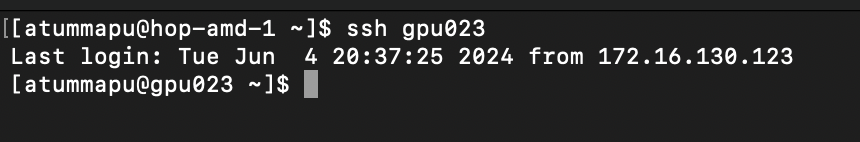

Once you have identified the GPU node where your job is running, log in to that node using the command below:

ssh <GPU_NODE>

Replace <GPU_NODE> with the name of the node on which your job is running.

Tracking GPU Utilization

GPU utilization can be tracked in several ways.

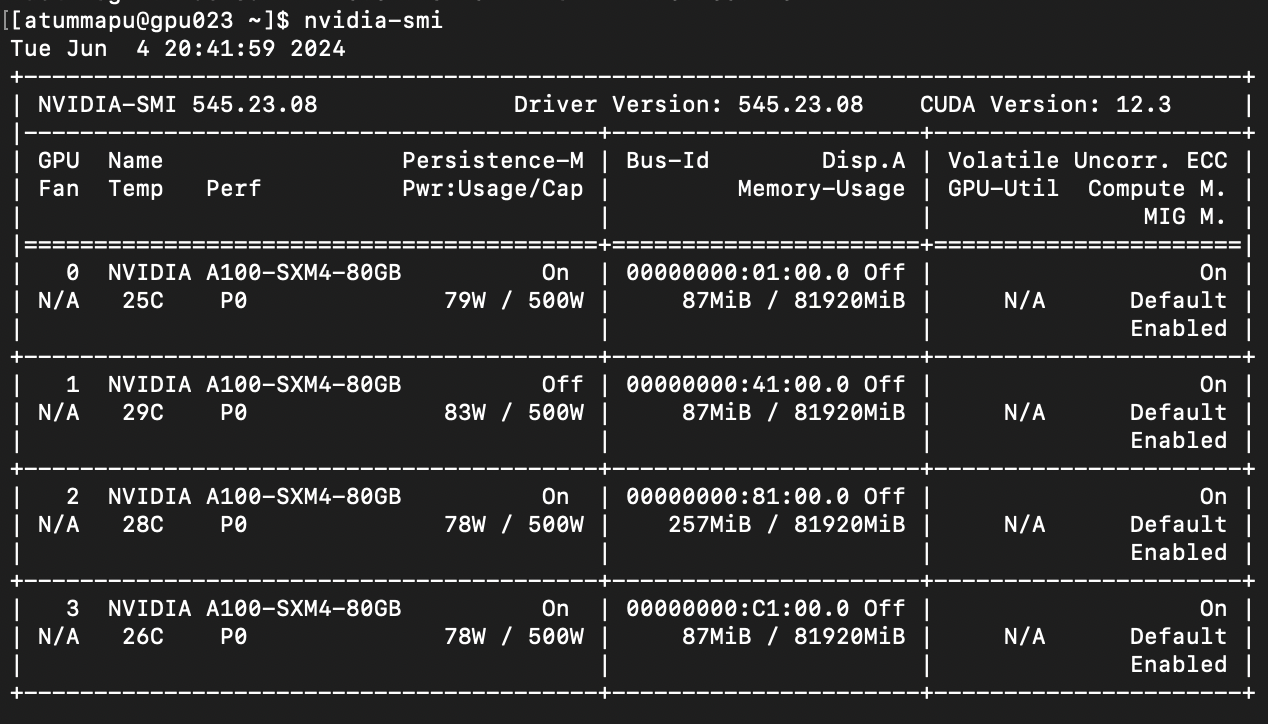

1. nvidia-smi

nvidia-smi (NVIDIA System Management Interface) is a command-line utility that provides monitoring and management capabilities for NVIDIA GPUs. It is included with the NVIDIA driver and can be used on systems running Linux, Windows, and FreeBSD.

Run nvidia-smi in a terminal as shown below.

nvidia-smi

However, this command prints a one-time snapshot. To refresh the output continuously, use the command below.

watch -n0.1 nvidia-smi

Here, 0.1 is the refresh interval in seconds. You can adjust it to suit your needs.

This command displays GPU utilization in near real time until it is terminated. Press CTRL+C to terminate it.
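nvidia-smi can also emit machine-readable output, which is easier to check in a script than the full table. The sketch below assumes the --query-gpu and --format=csv,noheader,nounits options of nvidia-smi and flags GPUs whose utilization is below a threshold. The helper name low_util_gpus and the default threshold of 10 (chosen to mirror the 10% example above) are illustrative assumptions.

```shell
# Report GPUs whose utilization is below a threshold (default: 10 percent).
# Reads CSV lines "index, utilization.gpu, memory.used, memory.total" on stdin,
# as produced by:
#   nvidia-smi --query-gpu=index,utilization.gpu,memory.used,memory.total \
#              --format=csv,noheader,nounits
# Rows whose utilization is not a plain number are skipped.
# (low_util_gpus is a hypothetical helper name.)
low_util_gpus() {
    awk -F', ' -v t="${1:-10}" '
        $2 ~ /^[0-9]+$/ && $2 + 0 < t + 0 {
            printf "GPU %s: %s%% util, %s/%s MiB\n", $1, $2, $3, $4
        }
    '
}

# Usage on a GPU node:
#   nvidia-smi --query-gpu=index,utilization.gpu,memory.used,memory.total \
#              --format=csv,noheader,nounits | low_util_gpus 10
```

A GPU that is reported here for most of a job's runtime is a candidate for a smaller allocation.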

Key Fields

- Driver Version: The version of the installed NVIDIA driver.

- CUDA Version: The highest CUDA version supported by the installed driver. This is not necessarily the version of the CUDA toolkit installed on the system.

- GPU Name: The name of the GPU (e.g., A100, H100, V100).

- Fan: The current fan speed as a percentage.

- Temp: The current temperature of the GPU in degrees Celsius.

- Perf: The current performance state of the GPU.

- Pwr Usage/Cap: The current power usage and the maximum power capacity of the GPU.

- Memory-Usage: The amount of GPU memory being used and the total available memory.

- GPU-Util: The percentage of GPU utilization.

- Processes: Lists processes using the GPU, along with their memory usage.
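To decide how large a GPU allocation to request, it helps to record the GPU-Util and Memory-Usage fields over the lifetime of a job rather than reading them once. The sketch below assumes the -l (loop interval in seconds) option of nvidia-smi together with its CSV query output. The log file name gpu_log.csv and the helper name summarize_gpu_log are hypothetical.

```shell
# Summarize a utilization log: sample count, average GPU utilization,
# and peak memory use in MiB.
# Reads CSV lines "utilization.gpu, memory.used" (no header, no units) on stdin.
# Rows that are not plain numbers are skipped.
# (summarize_gpu_log is a hypothetical helper name.)
summarize_gpu_log() {
    awk -F', ' '
        $1 ~ /^[0-9]+$/ && $2 ~ /^[0-9]+$/ {
            sum += $1
            if ($2 + 0 > peak) peak = $2 + 0
            n++
        }
        END {
            if (n > 0)
                printf "samples=%d avg_util=%.1f%% peak_mem=%d MiB\n", n, sum / n, peak
        }
    '
}

# Usage on a GPU node: sample every 5 seconds while the job runs, stop the
# logger with CTRL+C, then summarize. Without -i, rows from all visible GPUs
# are mixed together, so select a single GPU (here index 0) when the node has several.
#   nvidia-smi -i 0 --query-gpu=utilization.gpu,memory.used \
#              --format=csv,noheader,nounits -l 5 > gpu_log.csv
#   summarize_gpu_log < gpu_log.csv
```

A low average utilization or a peak memory well below the GPU's total suggests that a smaller GPU slice would be enough for the job.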

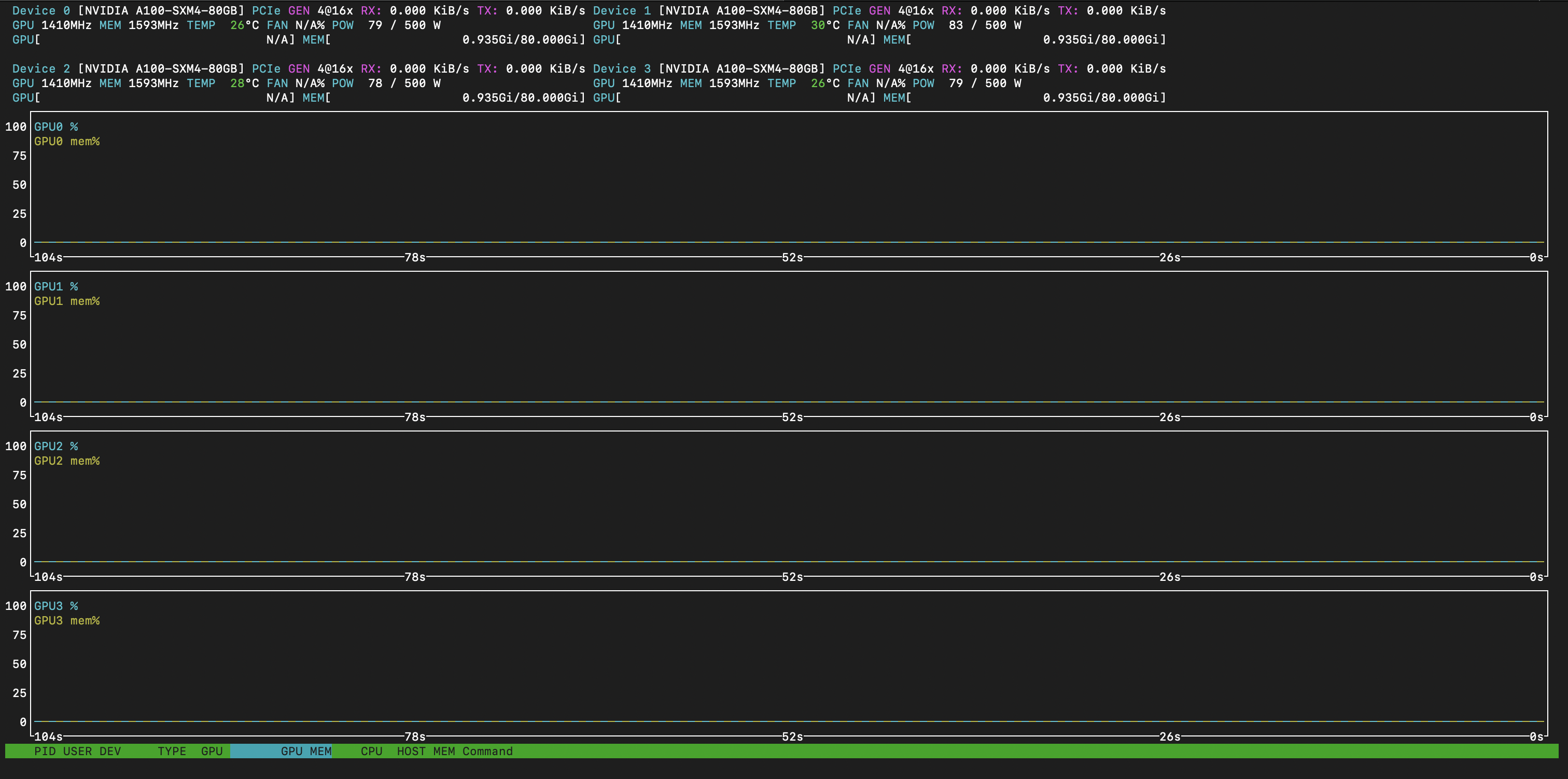

2. nvtop

nvtop (originally short for NVIDIA top; the project now expands the name as Neat Videocard TOP) is an open-source command-line utility for real-time GPU monitoring. It provides a top-like interface that displays essential metrics such as GPU utilization, memory usage, and temperature, and it can monitor multiple GPUs simultaneously in a single interactive view.

Run nvtop in a terminal as shown below.

nvtop

Key Fields

- GPU: The identifier of the GPU.

- Temp: The current temperature of the GPU in degrees Celsius.

- Memory-Usage: The amount of GPU memory being used and the total available memory.

- GPU-Util: The percentage of GPU utilization.

- Pwr-Usage/Cap: The current power usage and the maximum power capacity of the GPU.

- Name: The name of the GPU (e.g., A100, H100).

- PID: The process ID of the process using the GPU.

- User: The user owning the process.

- Memory: The amount of memory being used by the process.

- Command: The command or application using the GPU.

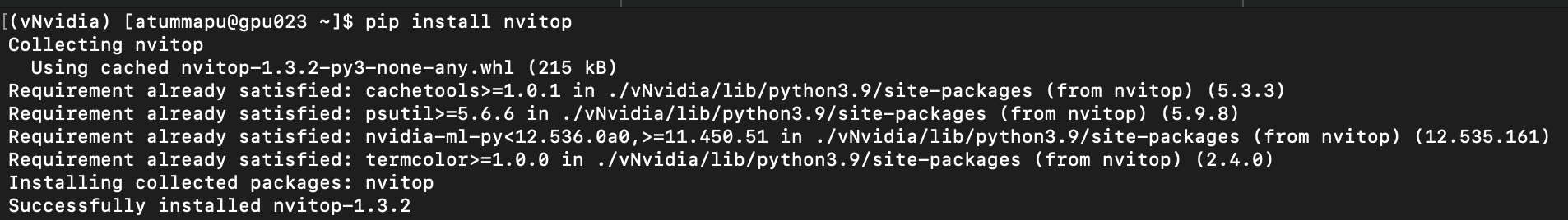

3. nvitop

nvitop is an open-source command-line utility designed for real-time monitoring of NVIDIA GPUs. It offers a top-like interface that displays detailed statistics about GPU utilization, memory usage, temperature, and running processes, providing a comprehensive view of GPU performance and resource usage.

To use the nvitop package, you must first install it, preferably in a Python virtual environment. Detailed instructions for creating a virtual environment can be found here.

First, activate the newly created or existing virtual environment using the command below:

source <VIRTUAL_ENV>/bin/activate

Replace <VIRTUAL_ENV> with the path to your virtual environment.

nvitop can be installed via Python's package manager, pip.

pip install nvitop

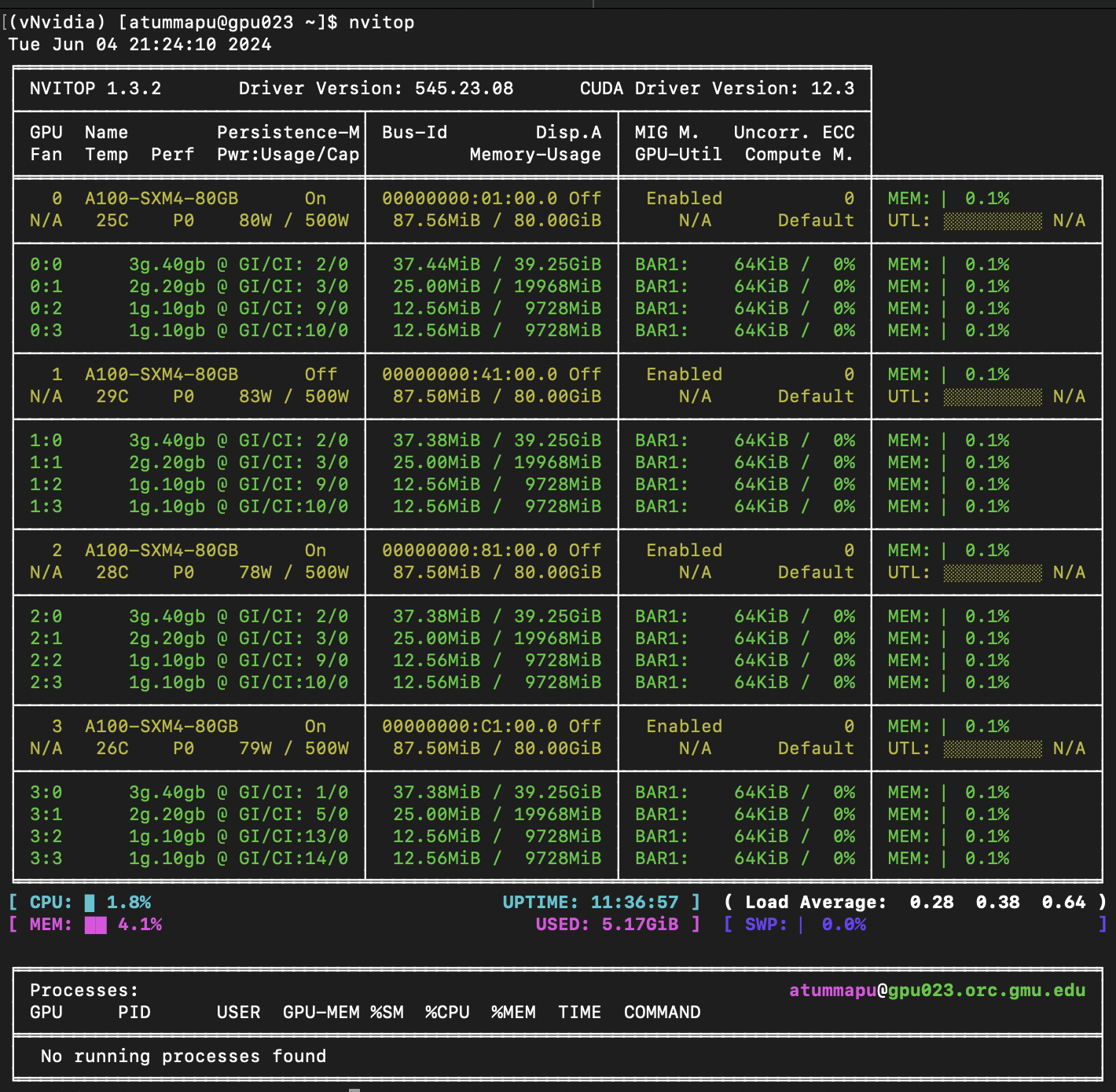

Run nvitop in a terminal as shown below.

nvitop

Key Fields

- GPU: The identifier of the GPU.

- Temp: The current temperature of the GPU in degrees Celsius.

- Memory-Usage: The amount of GPU memory being used and the total available memory.

- GPU-Util: The percentage of GPU utilization.

- Pwr-Usage/Cap: The current power usage and the maximum power capacity of the GPU.

- Name: The name of the GPU (e.g., A100, H100).

- PID: The process ID of the process using the GPU.

- User: The user owning the process.

- Memory: The amount of memory being used by the process.

- Command: The command or application using the GPU.

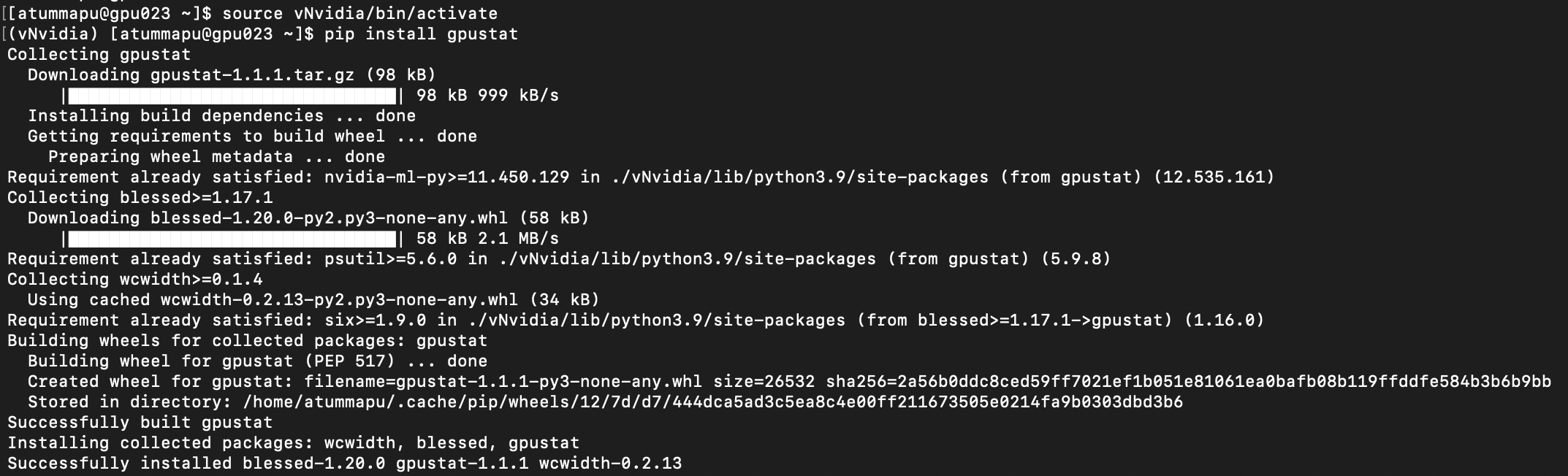

4. gpustat

gpustat is a Python-based command-line utility for real-time monitoring of NVIDIA GPUs. It provides an easy-to-read summary of GPU utilization, memory usage, temperature, and other essential metrics, making it a popular tool among data scientists, researchers, and developers for managing and optimizing GPU resources.

To use the gpustat package, you must first install it, preferably in a Python virtual environment. Detailed instructions for creating a virtual environment can be found here.

First, activate the newly created or existing virtual environment using the command below:

source <VIRTUAL_ENV>/bin/activate

Replace <VIRTUAL_ENV> with the path to your virtual environment.

gpustat can be installed via Python's package manager, pip.

pip install gpustat

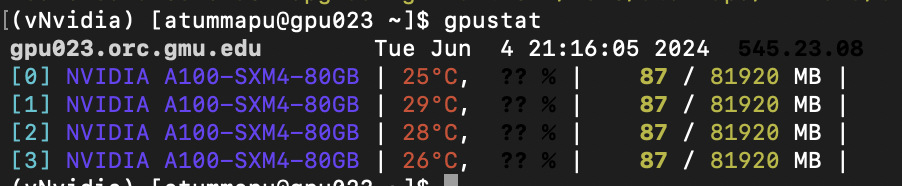

Run gpustat in a terminal as shown below.

gpustat

Key Fields

- GPU Index: The identifier of the GPU.

- GPU Name: The name of the GPU (e.g., GeForce GTX 1080).

- Temperature: The current temperature of the GPU in degrees Celsius.

- GPU Utilization: The percentage of GPU utilization.

- Memory Usage: The amount of GPU memory being used and the total available memory.

- Processes: Lists users and their respective memory usage on the GPU.